Businesses are hiring data scientists in droves to make rigorous, scientific, unbiased, data-driven decisions. Because the data is huge, the biggest challenge is building fast, scalable systems for prediction and decision making.

Without a fast, scalable decision-making engine, a business cannot build data-driven business models.

There are situations where a business needs real-time predictions from models that are retrained daily. Play Framework is one of the most popular high-velocity web frameworks. It lets you build web applications that follow the model-view-controller (MVC) architectural pattern. Apache Spark is a lightning-fast cluster computing engine that supports many mature distributed machine learning algorithms.

What if we could run Apache Spark on a local machine alongside Play Framework controllers?

If we can run a Spark application alongside a Play application, we can use Spark ML algorithms for real-time predictions.

In my recent work I had to set up Play with Spark, and I ran into multiple dependency issues with Akka and Guava versions. So I created a starter kit for anyone interested in working with Play and Spark together.

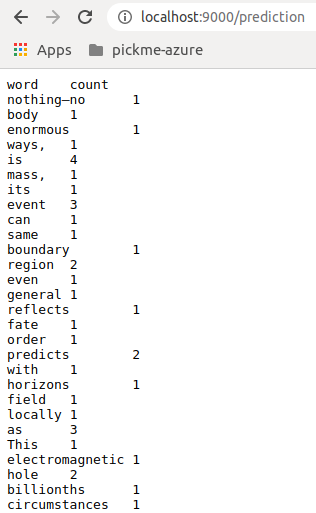

In this starter kit you will find an API endpoint that counts words using the Spark context and returns the result as the response (http://localhost:9000/prediction).
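To make the shape of that computation concrete, here is a minimal plain-Java sketch of a word count. It is only a stand-in: the starter kit runs the equivalent logic on a Spark context, and the class and method names below (`WordCountSketch`, `countWords`) are hypothetical, not taken from the kit.

```java
import java.util.Arrays;
import java.util.Map;
import java.util.TreeMap;
import java.util.stream.Collectors;

// Plain-Java stand-in for the word-count logic. The starter kit performs the
// equivalent computation on a Spark context, which is not shown here.
class WordCountSketch {

    // Split the text on whitespace, drop empty tokens, and count each word.
    static Map<String, Long> countWords(String text) {
        return Arrays.stream(text.trim().split("\\s+"))
                .filter(word -> !word.isEmpty())
                .collect(Collectors.groupingBy(
                        word -> word,
                        TreeMap::new,           // sorted keys give a stable order
                        Collectors.counting()));
    }

    public static void main(String[] args) {
        // prints {be=2, not=1, or=1, to=2}
        System.out.println(countWords("to be or not to be"));
    }
}
```

In a Spark version the same three steps (split, group by word, count) would be distributed across the cluster, and the controller would serialize the resulting map as the HTTP response.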

The example includes a Guice module for Spark model loading, which creates the Spark Context/Spark Session as a singleton object at application startup.
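The reason for a singleton is that a Spark session is expensive to create, so it should be built once and shared by every request. The sketch below illustrates only that idea in plain Java, without Guice or Spark; `ExpensiveContext` and `ContextHolder` are hypothetical names standing in for the session and the module. In Guice itself, a binding marked with `asEagerSingleton()` is one way to get an instance created at startup, though the kit's exact binding is not shown here.

```java
import java.util.concurrent.atomic.AtomicInteger;

// Hypothetical stand-in for an expensive resource such as a Spark session.
class ExpensiveContext {
    // Counts constructions so we can check that only one instance is built.
    static final AtomicInteger CREATED = new AtomicInteger();

    ExpensiveContext() {
        CREATED.incrementAndGet();
    }
}

// Holds the single shared instance. The JVM initializes the static field
// exactly once, in a thread-safe way, when the class is first used.
class ContextHolder {
    private static final ExpensiveContext INSTANCE = new ExpensiveContext();

    static ExpensiveContext get() {
        return INSTANCE;
    }
}

class SingletonSketch {
    public static void main(String[] args) {
        // Touch the holder during "startup" so the cost is paid before
        // the first request arrives.
        ExpensiveContext atStartup = ContextHolder.get();

        // Later "requests" receive the same instance.
        ExpensiveContext perRequest = ContextHolder.get();
        System.out.println(atStartup == perRequest);
        System.out.println(ExpensiveContext.CREATED.get());
    }
}
```

Paying the initialization cost at startup keeps the first prediction request from stalling while the context is built.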

By following this example, we can integrate more complex Spark ML models to provide real-time predictions.

    .
    ├── app
    │   ├── controllers
    │   │   └── HomeController.java
    │   ├── guice
    │   │   └── module
    │   │       └── MLLibModule.java --> Machine Learning guice module
    │   ├── ml
    │   │   └── ModelPrediction.scala --> Model prediction class (word count example)
    │   ├── response
    │   ├── startup
    │   │   └── AppLoader.java --> App loader module
    .

Starter Kit: Play-With-Spark